Computer vision for sports lacks datasets and models that target pole sports, an activity marked by self-occlusion, body inversions, and sustained contact with the pole. We address this gap by introducing Pole-Arina, a curated, privacy-preserving dataset for markerless analysis of static pole tricks.

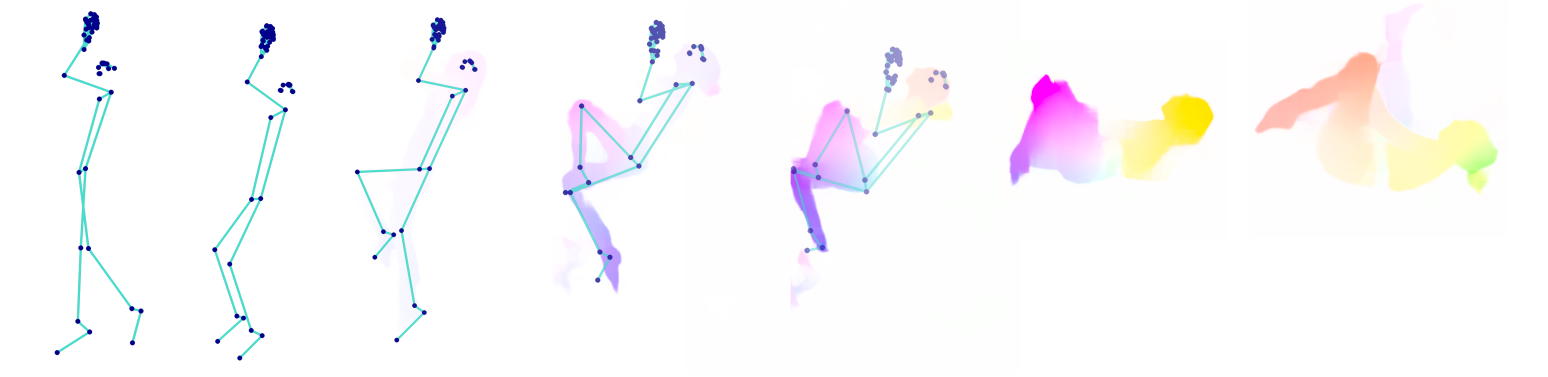

The collection comprises 836 videos from 58 participants and provides two appearance-suppressing modalities derived from the recordings: pose skeleton sequences (2D joints) and dense optical flow. We annotate each clip with both per-video trick labels and per-frame labels that capture temporal structure, enabling evaluation of clip-level and frame-wise recognition under privacy constraints.
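To make the difference between the two label granularities concrete, the following is a minimal sketch of frame-wise scoring and of collapsing per-frame labels into a single clip label. It is not the dataset's evaluation code: the function names, the majority-vote rule, and the `"transition"` label are hypothetical assumptions chosen for illustration, and the actual label set and file layout are not specified here.

```python
from collections import Counter


def frame_accuracy(pred, true):
    """Fraction of frames whose predicted label matches the annotation."""
    if len(pred) != len(true):
        raise ValueError("prediction and annotation lengths differ")
    if not true:
        return 0.0
    return sum(p == t for p, t in zip(pred, true)) / len(true)


def clip_label_by_vote(frame_labels, ignore=("transition",)):
    """Collapse per-frame labels into one clip label by majority vote.

    Labels listed in `ignore` are hypothetical non-trick labels
    (an assumption, not part of the published annotation scheme).
    """
    votes = Counter(label for label in frame_labels if label not in ignore)
    if not votes:
        return None
    return votes.most_common(1)[0][0]


# Toy example with made-up labels.
predicted = ["transition", "trick_a", "trick_a", "trick_b"]
annotated = ["transition", "trick_a", "trick_a", "trick_a"]
frame_score = frame_accuracy(predicted, annotated)
clip_match = clip_label_by_vote(predicted) == clip_label_by_vote(annotated)
```

In this toy case one frame is misclassified, yet the clip-level vote still agrees with the annotation, which is why the two settings are worth reporting separately.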

As reference baselines, we evaluate lightweight temporal models across all benchmark settings and analyze common confusions and imbalance effects. In addition, we provide a geometry-aware analysis module that measures trick-specific body orientation, joint alignments, and proximities to produce interpretable overlays and actionable feedback.
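The kinds of measurements such a module relies on can be sketched from 2D joints alone. The example below is an illustrative assumption, not the module's actual implementation: the function names, the choice of joints, and the representation of the pole as a line through two image points are all hypothetical. It assumes image coordinates with the y axis pointing downward.

```python
import numpy as np


def joint_angle(a, b, c):
    """Angle in degrees at joint b, formed by the segments b->a and b->c."""
    a, b, c = (np.asarray(p, dtype=float) for p in (a, b, c))
    u, v = a - b, c - b
    denom = np.linalg.norm(u) * np.linalg.norm(v)
    if denom == 0.0:
        return float("nan")  # degenerate: two joints coincide
    cos = np.clip(np.dot(u, v) / denom, -1.0, 1.0)
    return float(np.degrees(np.arccos(cos)))


def distance_to_pole(point, pole_top, pole_bottom):
    """Perpendicular 2D distance from a joint to the line through the pole."""
    p, q0, q1 = (np.asarray(x, dtype=float) for x in (point, pole_top, pole_bottom))
    d = q1 - q0
    length = np.linalg.norm(d)
    if length == 0.0:
        return float(np.linalg.norm(p - q0))
    # Magnitude of the 2D cross product divided by the line length.
    return float(abs(d[0] * (p[1] - q0[1]) - d[1] * (p[0] - q0[0])) / length)


def is_inverted(hip, shoulder):
    """True if the hips are above the shoulders (smaller y in image coordinates)."""
    return hip[1] < shoulder[1]


# Toy keypoints in pixels (made up for illustration).
knee_angle = joint_angle((1.0, 0.0), (0.0, 0.0), (0.0, 1.0))
hand_gap = distance_to_pole((3.0, 5.0), (0.0, 0.0), (0.0, 10.0))
inverted = is_inverted(hip=(0.0, 10.0), shoulder=(0.0, 50.0))
```

Comparing such values against trick-specific target ranges is one plausible way to turn measurements into feedback; the thresholds themselves would have to come from the authors' module.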

@inproceedings{polearina2026,
  title = {Pole-Arina: A Privacy-Preserving Dataset and Benchmark for Static Pole Tricks},
  author = {Diana Marin and Katharina Scheucher and Peter Kán},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},
  month = {June},
  year = {2026},
}